Production-Ready Carrier API Test Harnesses: Why 73% of Sandbox-Verified Integrations Fail in Live Traffic and How to Build Testing That Actually Predicts Real-World Behavior

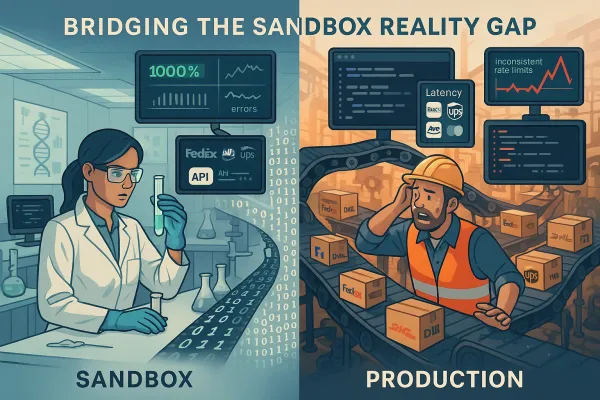

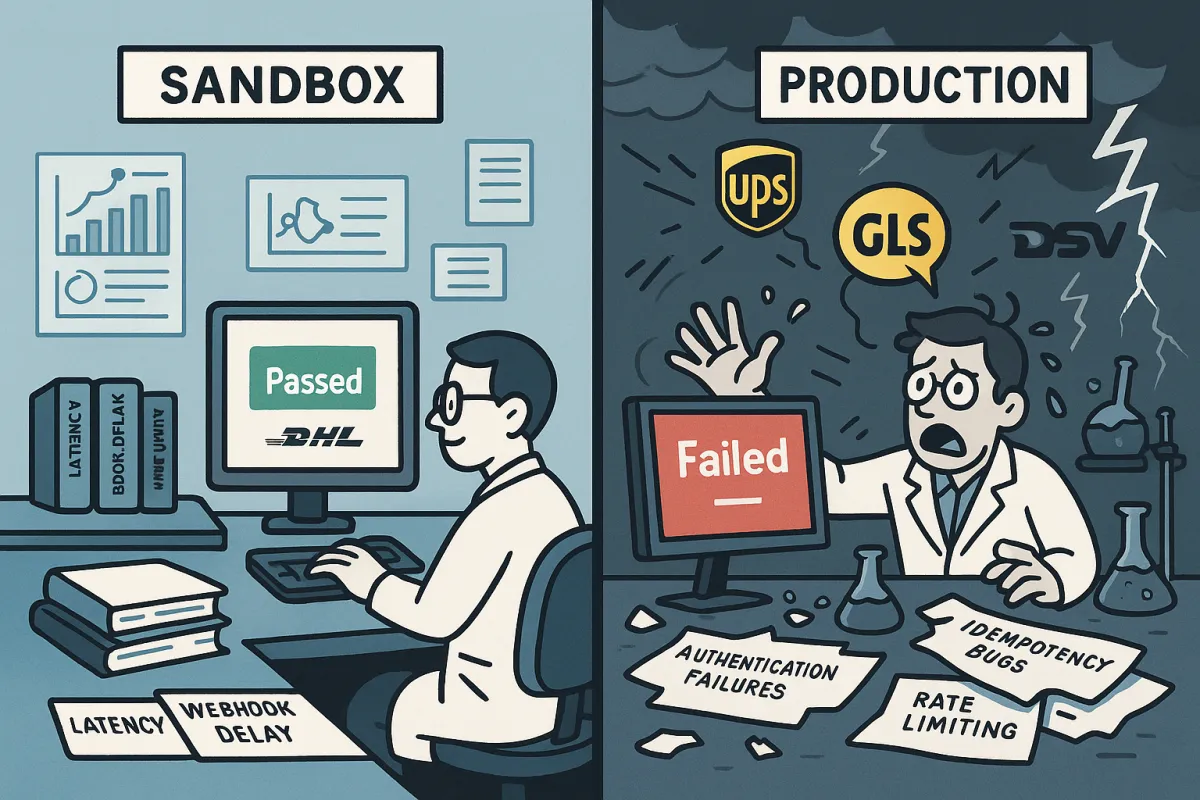

Your integration passed sandbox testing with flying colors. The webhook endpoints responded perfectly. Rate requests returned clean responses. Authentication flows worked without a single error. Then you deployed to production and discovered what 73% of integration teams learn the hard way: carrier API integrations that sail through sandbox testing can start failing authentication in production within weeks of deployment.

72% of implementations face reliability issues within their first month of production deployment. API downtime jumped from 34 minutes to 55 minutes weekly between Q1 2024 and Q1 2025. The underlying cause isn't mysterious: sandbox environments systematically hide the failure patterns that production traffic exposes, and carrier API testing that predicts production behavior has to reproduce them.

The Sandbox Deception: Why Perfect Test Results Become Production Disasters

The USPS API migration exposes this reliability gap perfectly. The legacy API handled roughly 6,000 requests per minute without throttling. The new limit of 60 calls per hour allows about one request per minute, a 6,000x reduction that makes workflows built around the old throughput impossible.

Your test environment validated perfect responses against a handful of requests. Production reality hits when your retry logic creates thundering herd problems, when webhook delays stack up during peak hours, or when failover systems simultaneously hit the same backup carrier. It also hits when your system tries to create 200 labels in rapid succession or validate hundreds of addresses for a large shipment: carriers like DHL start with basic limits of 250 calls per day, with a maximum of 1 call every 5 seconds, before requiring approval for higher thresholds.

Authentication Cascade Failures That Sandboxes Miss

The most insidious failure pattern involves token refresh logic breaking down under load. It manifests as intermittent 401 responses during peak traffic periods, particularly around OAuth token refresh operations. 54% of attacks observed relate to security misconfigurations, with developers struggling to properly configure authentication flows across multiple carrier environments.

Teams test successfully in sandbox environments where security constraints are relaxed, then deploy to production where full authentication requirements create unexpected failure points. The gap emerges from scope permissions, token validation, and session management complexities that only surface under concurrent load.
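One way to exercise token refresh under concurrent load is to put the token behind a small cache and assert that a burst of simultaneous callers triggers exactly one refresh. The sketch below is a minimal illustration, not any carrier's SDK: `TokenCache`, `fetch_token`, and the 30-second skew are hypothetical names and values chosen for the example, and the fake token endpoint simply sleeps for 10 ms.

```python
import threading
import time


class TokenCache:
    """Caches a bearer token and refreshes it at most once per expiry."""

    def __init__(self, fetch_token, skew_seconds=30.0, clock=time.monotonic):
        # fetch_token is a hypothetical callable returning (token, ttl_seconds).
        self._fetch_token = fetch_token
        self._skew = skew_seconds
        self._clock = clock
        self._lock = threading.Lock()
        self._token = None
        self._expires_at = 0.0

    def get(self):
        # Holding the lock during the fetch serializes callers, so a burst of
        # requests with an expired token results in a single refresh call.
        with self._lock:
            expired = self._clock() >= self._expires_at - self._skew
            if self._token is None or expired:
                token, ttl = self._fetch_token()
                self._token = token
                self._expires_at = self._clock() + ttl
            return self._token


def count_refreshes_under_load(workers=50):
    """Hit an empty cache from many threads; return (refresh_count, tokens_seen)."""
    calls = []

    def fake_fetch():
        time.sleep(0.01)  # simulated token endpoint latency
        calls.append(1)
        return f"token-{len(calls)}", 3600.0

    cache = TokenCache(fake_fetch)
    results = []
    threads = [
        threading.Thread(target=lambda: results.append(cache.get()))
        for _ in range(workers)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return len(calls), set(results)
```

A harness can run `count_refreshes_under_load` with production-like worker counts and fail the build if more than one refresh is observed or if callers see different tokens.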

Enterprise platforms like Cargoson, MercuryGate, and nShift handle these authentication complexities through abstraction layers, but direct integrations need specific testing for token refresh timing under production load patterns.

Building Test Harnesses That Mirror Production Reality

DHL's test environment limits you to 500 service invocations daily, but their production thresholds operate differently. Your test harness needs time-based scenarios that simulate peak processing windows, bulk operations that trigger cascading failures, and carrier maintenance windows that expose failover logic weaknesses.

Different carriers implement throttling mechanisms distinctly. When FedEx, DHL, and UPS APIs all throttle simultaneously during Black Friday volume, theoretical improvements from rate limiting disappear fast. Your testing framework needs carrier-specific failure simulation that accounts for sliding windows versus hard blocks, burst allowances versus sustained rates.
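The difference between windowing strategies can be reproduced with two small simulated limiters. This is a sketch under stated assumptions: the class names and the limit of 5 requests per 60 seconds are invented for illustration and do not describe any specific carrier's policy. Timestamps are passed in explicitly so the test needs no real waiting.

```python
from collections import deque


class FixedWindowLimit:
    """Counter that resets at fixed window boundaries (hard block until reset)."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window = window_seconds
        self.bucket = None
        self.count = 0

    def allow(self, now):
        bucket = int(now // self.window)
        if bucket != self.bucket:
            self.bucket, self.count = bucket, 0
        if self.count < self.limit:
            self.count += 1
            return True
        return False


class SlidingWindowLimit:
    """Allows at most `limit` accepted requests in any trailing window."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window = window_seconds
        self.accepted = deque()

    def allow(self, now):
        # Drop accepted requests that have aged out of the trailing window.
        while self.accepted and self.accepted[0] <= now - self.window:
            self.accepted.popleft()
        if len(self.accepted) < self.limit:
            self.accepted.append(now)
            return True
        return False


def accepted_count(limiter, timestamps):
    return sum(1 for t in timestamps if limiter.allow(t))


# A burst that straddles a window boundary: 5 requests at t=59s, 5 at t=61s.
burst = [59.0] * 5 + [61.0] * 5
fixed_accepted = accepted_count(FixedWindowLimit(5, 60), burst)
sliding_accepted = accepted_count(SlidingWindowLimit(5, 60), burst)
```

The same burst is fully accepted by the fixed-window limiter and half rejected by the sliding-window one, which is why a retry strategy tuned against one carrier's behavior can misfire against another's.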

The testing approach must include authentication stress testing, bulk processing scenario validation, and multi-carrier failover logic under simultaneous rate limiting. Most teams test happy path scenarios with isolated requests. Real production breaks happen during coordinated failure scenarios.

Rate Limiting Test Patterns by Carrier

Each carrier implements rate limiting differently, requiring specific test patterns. At the USPS limit of 60 requests per hour, a mid-size Shopify store doing 200 orders daily will exceed the rate limit during peak hours, because address validation, rate shopping, label creation, and tracking all share the same quota.
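The shared-quota arithmetic is easy to check. The sketch below assumes a traffic shape that is not in the source: four API calls per order (one each for address validation, rate shopping, label creation, and tracking) and 30% of daily orders arriving in a two-hour peak.

```python
HOURLY_LIMIT = 60  # shared hourly quota quoted in the text


def peak_hourly_calls(orders_per_day, calls_per_order, peak_share_pct, peak_hours):
    """Average API calls per hour during the peak window."""
    peak_orders = orders_per_day * peak_share_pct / 100
    return peak_orders * calls_per_order / peak_hours


# Assumed traffic shape: 4 calls per order, 30% of orders in a 2-hour peak.
demand = peak_hourly_calls(200, 4, 30, 2)
print(f"peak demand: {demand:.0f} calls/hour, over limit: {demand > HOURLY_LIMIT}")
```

Under these assumptions the daily total (200 x 4 = 800 calls) fits inside 24 x 60 = 1,440 calls per day, yet the peak window needs twice the hourly quota. Test scenarios built on daily averages would miss this.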

DHL provides 250 calls daily with 5-second intervals before approval requirements. UPS OAuth 2.0 transitions created specific authentication timing requirements. FedEx SOAP retirement introduces payload mapping complexities that surface under volume pressure.

Your test harness should generate request patterns that approach these limits progressively, then simulate recovery scenarios when carriers implement exponential backoff requirements. When DHL returns a 429, your system should implement exponential backoff with jitter, not immediately failover to backup carriers.
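A minimal sketch of that retry policy follows, using the "full jitter" variant where each delay is drawn uniformly between zero and an exponentially growing ceiling. The function names, the 1-second base, and the 60-second cap are assumptions for illustration. `send` stands in for whatever function issues the carrier request and returns an HTTP status code.

```python
import random
import time


def backoff_delays(max_retries, base=1.0, cap=60.0, rng=random.random):
    """Yield full-jitter delays: rng() * min(cap, base * 2**attempt)."""
    for attempt in range(max_retries):
        yield rng() * min(cap, base * (2 ** attempt))


def call_with_backoff(send, max_retries=5, sleep=time.sleep, **backoff_kwargs):
    """Call send() until it returns a non-429 status or retries run out."""
    status = send()
    for delay in backoff_delays(max_retries, **backoff_kwargs):
        if status != 429:
            break
        sleep(delay)  # wait before retrying the same carrier
        status = send()
    return status
```

Injecting `sleep` and `rng` keeps the policy testable: a harness can feed a scripted sequence of 429 responses, record the requested delays, and assert that failover is only considered after `call_with_backoff` still returns 429.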

Production Failure Mode Testing Framework

Between Q1 2024 and Q1 2025, average API uptime fell from 99.66% to 99.46%, resulting in 60% more downtime affecting European shippers trying to maintain reliable multi-carrier integrations during peak season. Your testing framework needs scenarios that replicate these compound failure modes.
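The uptime percentages translate directly into weekly downtime, which is a useful sanity check when setting test budgets. The short calculation below uses only the two uptime figures from the paragraph above.

```python
MINUTES_PER_WEEK = 7 * 24 * 60  # 10,080


def weekly_downtime_minutes(uptime_percent):
    """Expected downtime per week implied by an uptime percentage."""
    return (100.0 - uptime_percent) / 100.0 * MINUTES_PER_WEEK


before = weekly_downtime_minutes(99.66)
after = weekly_downtime_minutes(99.46)
increase_pct = (after / before - 1.0) * 100.0
print(f"{before:.1f} -> {after:.1f} minutes/week (+{increase_pct:.0f}%)")
```

This works out to roughly 34 and 54 minutes per week, close to the weekly figures quoted earlier, and an increase of just under 60%.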

Data validation failure rates exceeding 5% create critical functionality gaps. When DHL's API returns 200 OK but the response contains an empty tracking array, your monitoring should flag this as functional failure, not success. Migration downtime scenarios require testing primary carrier rate limit states alongside backup carrier transition logic.
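The distinction between transport success and functional success can be encoded in a small classifier. This is a sketch only: the `tracking` field name is a hypothetical stand-in for whatever key the carrier's response actually uses, and the outcome labels are invented for the example.

```python
def classify_tracking_response(status_code, body):
    """Classify a tracking response by content, not just by status code."""
    if status_code != 200:
        return "transport_failure"
    # "tracking" is an assumed field name for the list of tracking events.
    events = body.get("tracking") if isinstance(body, dict) else None
    if not isinstance(events, list) or len(events) == 0:
        return "functional_failure"  # 200 OK but nothing usable inside
    return "ok"


def failure_rate(outcomes):
    """Share of outcomes that are not 'ok' (0.0 for an empty sample)."""
    outcomes = list(outcomes)
    if not outcomes:
        return 0.0
    return sum(o != "ok" for o in outcomes) / len(outcomes)
```

Feeding the classifier's output into `failure_rate` lets monitoring compare the functional failure share against the 5% threshold instead of counting every 200 as a success.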

Enterprise platforms like EasyPost, ShipEngine, Cargoson, and Descartes handle these transitions more gracefully through abstraction layers, but direct integrations need custom fallback logic that accounts for simultaneous carrier constraints.

Monitoring Indicators That Predict Failures

Dynamic rate limiting can cut server load by up to 40% during peak times while maintaining availability, but monitoring must track leading indicators beyond simple response codes. Request volume surges that approach rate limits, error rates trending toward 5% thresholds, and response times crossing 500ms baselines signal impending failures.

Proper rate limit detection monitors request patterns leading up to 429 responses, using sliding window monitoring that tracks requests per carrier over multiple time periods. A sudden spike in 429s indicates misconfigured batch jobs, while a gradual rise in 429s suggests organic traffic growth requiring infrastructure adjustments.
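A per-carrier sliding window monitor can be sketched in a few lines. The class name, the 60-second and 3,600-second windows, and the 80% warning threshold are assumptions chosen for illustration. The sketch also assumes timestamps are recorded in non-decreasing order.

```python
from collections import defaultdict, deque


class RequestRateMonitor:
    """Tracks request timestamps per carrier over several trailing windows."""

    def __init__(self, windows_seconds=(60, 3600)):
        self.windows = tuple(sorted(windows_seconds))
        self._events = defaultdict(deque)

    def record(self, carrier, now):
        self._events[carrier].append(now)

    def counts(self, carrier, now):
        """Return {window_seconds: requests seen in that trailing window}."""
        events = self._events[carrier]
        horizon = now - self.windows[-1]
        while events and events[0] <= horizon:  # drop anything older than the longest window
            events.popleft()
        return {w: sum(1 for t in events if t > now - w) for w in self.windows}

    def near_limit(self, carrier, now, limit, window, threshold=0.8):
        """True when usage in `window` has reached `threshold` of `limit`."""
        return self.counts(carrier, now)[window] >= threshold * limit
```

Alerting on `near_limit` gives a warning before the first 429 arrives, and comparing the short and long windows helps separate a sudden batch-job spike from slow organic growth.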

Real-World Test Case Library

Document specific failure scenarios from recent carrier migration experiences. USPS Web Tools API Versions 1 & 2 were retired in January 2025, with Version 3 following in January 2026. FedEx's remaining SOAP-based endpoints will be fully retired in June 2026.

Black Friday traffic scenarios expose cascading failures that simple rate limit headers don't reveal. Under the new limits, a batch job that previously ran in 10 minutes now takes 100 hours, a permanent architectural constraint that production systems must account for.

Beyond volume testing, your scenarios need webhook amnesia simulation where platforms accept events successfully but fail to deliver 12-18% of notifications during traffic spikes. These silent failures prove particularly dangerous because application logs show successful webhook registrations while downstream systems never receive updates.
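Silent webhook loss can only be detected by comparing against an independent source of truth, for example event IDs obtained by polling the tracking API. The sketch below assumes such a list of expected IDs is available. The function name and the integer IDs are illustrative.

```python
def missing_webhooks(expected_event_ids, received_event_ids):
    """Return (sorted missing IDs, missing share of expected events)."""
    expected = set(expected_event_ids)
    missing = expected - set(received_event_ids)
    rate = len(missing) / len(expected) if expected else 0.0
    return sorted(missing), rate


# Simulated spike: 100 events expected, every 7th one never delivered.
expected = range(100)
received = [i for i in expected if i % 7 != 0]
missing, rate = missing_webhooks(expected, received)
```

A test harness can drop a configurable share of simulated deliveries and assert that the reconciliation job reports the same IDs, so the loss is caught even when the application logs show nothing wrong.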

Recovery Testing Scenarios

Circuit breaker patterns prevent cascading failures when carrier endpoints go down by tracking error rates and response times, automatically stopping requests when thresholds are exceeded, and periodically testing if services have recovered. Your recovery testing must include throttling implementation scenarios, delayed request processing through queue systems, and multi-carrier fallback validation.
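A minimal circuit breaker with the three usual states (closed, open, half-open) might look like the sketch below. It is a simplification: it counts consecutive failures rather than tracking error rates and response times, it lets every call through while half-open instead of a single probe, and the thresholds are placeholder values. The clock is injectable so recovery can be tested without waiting.

```python
import time


class CircuitBreaker:
    """Opens after repeated failures, then probes again after a cool-down."""

    def __init__(self, failure_threshold=5, recovery_seconds=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.recovery_seconds = recovery_seconds
        self._clock = clock
        self.state = "closed"
        self.failures = 0
        self.opened_at = 0.0

    def allow_request(self):
        if self.state == "open":
            if self._clock() - self.opened_at >= self.recovery_seconds:
                self.state = "half_open"  # cool-down elapsed: let a probe through
                return True
            return False
        return True

    def record_success(self):
        self.failures = 0
        self.state = "closed"

    def record_failure(self):
        self.failures += 1
        # A failed probe, or too many consecutive failures, (re)opens the circuit.
        if self.state == "half_open" or self.failures >= self.failure_threshold:
            self.state = "open"
            self.opened_at = self._clock()
```

Recovery tests can drive the breaker with a fake clock: trip it, advance time past the cool-down, fail the probe to confirm it reopens, then succeed to confirm it closes.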

Platforms implementing abstraction layers like Cargoson, MercuryGate, and BluJay provide circuit breaker patterns, but direct integrations need custom implementation that accounts for carrier-specific recovery characteristics.

Implementation Guide: From Sandbox Success to Production Confidence

Start with carrier-specific rate limiting simulation that mirrors production constraints. Build authentication stress testing that validates token refresh timing under concurrent load. Implement bulk processing scenarios that trigger the cascading failures your production environment will face.

Your test harness architecture should include endpoint availability monitoring, response validation checking for expected data structures, business logic validation ensuring rate responses include actual pricing, and dependency health monitoring for upstream services affecting API behavior.
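These layers can be expressed as an ordered list of checks run against each response. The sketch below covers the first three layers for a rate request. Dependency health is left out because it depends on infrastructure outside the response itself. The response shape (`status_code`, `body`, `rates`, `amount`) is a hypothetical structure for illustration, not any carrier's schema.

```python
def _rates(response):
    """Return the list of rates from the response, or None if malformed."""
    body = response.get("body")
    rates = body.get("rates") if isinstance(body, dict) else None
    return rates if isinstance(rates, list) else None


def _has_pricing(response):
    """Business rule: at least one rate, and every rate has a positive amount."""
    rates = _rates(response)
    return bool(rates) and all(
        isinstance(r, dict)
        and isinstance(r.get("amount"), (int, float))
        and r["amount"] > 0
        for r in rates
    )


CHECKS = [
    ("availability", lambda r: r.get("status_code") == 200),
    ("structure", lambda r: _rates(r) is not None),
    ("business_logic", _has_pricing),
]


def failed_layers(response, checks=CHECKS):
    """Return the names of the validation layers that fail, in order."""
    return [name for name, check in checks if not check(response)]
```

Reporting which layer failed, rather than a single pass or fail flag, makes it clear whether a problem is an outage, a schema change, or a response that is well formed but commercially useless.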

Platforms like Cargoson, MercuryGate, and BluJay provide an abstraction layer that reduces direct carrier integration complexity, and aggregators like EasyPost, ShipEngine, Cargoson, and nShift absorb much of the same complexity. High-volume operations with custom requirements might still justify direct carrier integrations despite the reliability challenges.

The investment strategy prioritizes infrastructure over features: build the monitoring and validation layers described above before adding new capabilities. Production-predictive testing requires comprehensive failure mode simulation before your customers discover the gaps that sandbox testing missed.