Carrier API Cascade Detection: Building Monitoring That Prevents Multi-Carrier Domino Failures Before They Kill Your Shipping Operations

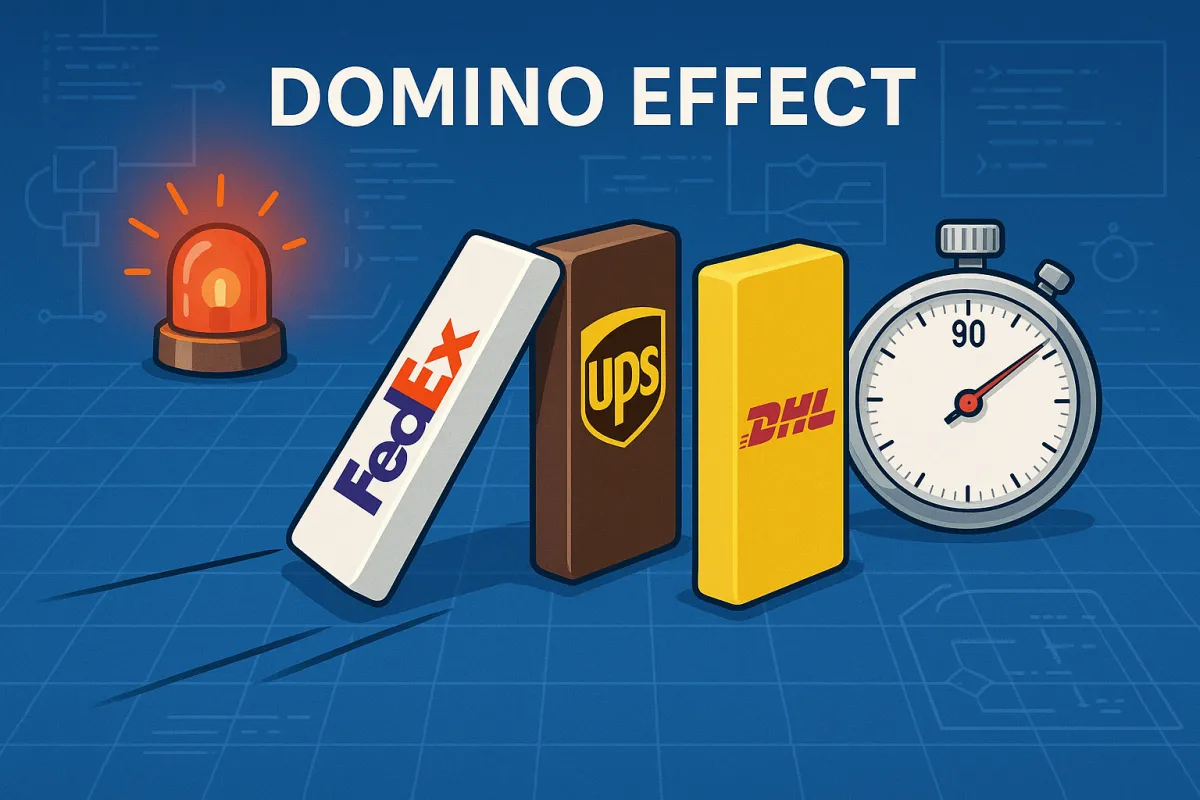

Your shipping operation faces an escalating threat that traditional monitoring systems can't catch. Between Q1 2024 and Q1 2025, average API uptime fell from 99.66% to 99.46%, resulting in 60% more downtime year-over-year. But here's what makes this crisis particularly dangerous for multi-carrier operations: FedEx rate limits trigger failover to UPS, which then hits its limits and fails over to DHL, creating a "carrier domino effect" that exhausts all available options within 90 seconds.

This isn't theoretical. Average weekly API downtime rose from 34 minutes in Q1 2024 to 55 minutes in Q1 2025, while your cascade detection systems remain fundamentally unprepared for the reliability patterns now dominating carrier integration failures.

The Multi-Carrier Cascade Crisis

October 2025 revealed the true scope of this problem. When AWS infrastructure issues triggered a domino effect, platforms like ShipEngine and ShipStation didn't fail alone. When FedEx, DHL, and UPS APIs all throttle simultaneously during Black Friday volume, those theoretical improvements disappear fast. The cascading failures that followed exposed a fundamental gap: most monitoring systems can detect individual carrier failures but miss the critical moments when multiple carriers fail in sequence.

The mathematics are stark. Logistics saw the sharpest decline in API uptime as providers expanded their digital ecosystems to meet rising demand for real-time tracking, inventory updates, and third-party platform integrations. This rapid growth increased reliance on external APIs across warehousing, transport, and delivery networks — amplifying the risk of downtime from system overloads, partner outages, and inconsistent monitoring practices.

Your traditional uptime monitoring checks individual endpoints every few minutes. That approach completely misses the 90-second window where cascading rate limits destroy your entire shipping capacity. When UPS hits its limit at 11:47 AM, your system routes traffic to FedEx, which hits its own limit by 11:48 AM, forcing a final failover to DHL that exhausts your daily quota by 11:49 AM.

Sound familiar? You're not alone. Between Q1 2024 and Q1 2025, average API uptime fell from 99.66% to 99.46%, resulting in 60% more downtime year-over-year. That's not just statistics—it's production reality hitting European shippers trying to maintain reliable multi-carrier integrations during peak season.

Anatomy of a Carrier Domino Effect

Real cascade patterns follow predictable sequences that your monitoring can anticipate. Three months of continuous monitoring revealed failure patterns that don't appear in vendor documentation. When a cache expires, thousands of simultaneous requests hit the database at once. This overwhelms warehouse management APIs, so that the entire logistics system integration slows down. The "thundering herd" problem becomes exponentially worse in multi-carrier environments.

The authentication cascade represents the most insidious failure pattern. The most insidious failure pattern involved token refresh logic breaking down under load. When La Poste's API started returning 401 errors for previously valid tokens, most monitoring systems classified this as a temporary authentication issue. But the real problem was more complex. Their OAuth implementation couldn't handle concurrent refresh requests from the same client. If your system made simultaneous calls during token expiry, you'd get a mix of successful authentications and failures.

Token bucket implementations create additional vulnerability during cascade scenarios. When EasyPost's cache expires simultaneously with nShift's rate limit reset, the resulting traffic spike hits carrier APIs that weren't designed for synchronized load increases. Multi-carrier platforms like Cargoson, ShipEngine, nShift, and EasyPost each implement different approaches to managing these scenarios, but none completely eliminate the underlying risk.

Consider this real-world sequence: DHL Express schedules routine maintenance on Monday mornings. Your system automatically increases load on UPS and FedEx to compensate. But UPS is simultaneously processing return-to-service traffic from their weekend batch processes. FedEx receives the combined load spike and starts throttling within minutes. Your entire shipping operation goes dark before you realize all three carriers hit limits simultaneously.

Early Warning Signal Detection

Cascade prevention starts with monitoring the right signals before rate limits trigger. Response time degradation precedes throttling by several minutes in most carrier implementations. While Datadog might catch your server metrics and New Relic monitors your application performance, neither understands why UPS suddenly started returning 500 errors for rate requests during peak shipping season, or why FedEx's API latency spiked precisely when your Black Friday labels needed processing.

Monitor these cascade warning signals across all carriers simultaneously:

Response time increases from 200ms to 500ms consistently indicate approaching rate limits. This pattern appears 3-5 minutes before carriers start returning 429 errors. Your monitoring needs carrier-specific baselines because DHL's 400ms might be normal while UPS 300ms signals problems.

Header analysis reveals carrier-specific throttling signals. FedEx includes proprietary headers showing remaining request capacity. UPS error codes indicate different throttling scenarios. DHL endpoint variations signal different classes of rate limit enforcement. Your monitoring must parse these carrier-specific indicators, not just HTTP status codes.

Effective monitoring requires carrier-specific alerting. Set up alerts that distinguish between different types of rate limit issues. Getting close to daily quotas requires different responses than hitting burst limits. We found that generic "high error rate" alerts miss the nuanced patterns of carrier throttling.

Sliding window rate limit detection tracks request patterns across multiple time periods. Monitor requests per minute, requests per hour, and requests per day simultaneously. Cascade scenarios develop when burst patterns overwhelm daily quotas even when minute-by-minute usage appears normal.

Cascade-Aware Monitoring Architecture

Your monitoring architecture needs fundamental changes to catch cascade scenarios before they develop. Standard monitoring tools treat each carrier API independently. That approach completely misses the interdependencies that create cascade failures.

Implement sliding window monitoring that tracks requests per carrier over multiple overlapping time periods. Five-minute, fifteen-minute, and hourly windows reveal different aspects of cascade development. A carrier might appear healthy on five-minute metrics while approaching daily quotas that will trigger throttling within the hour.

Multi-carrier platforms like Cargoson, EasyPost, nShift, and ShipEngine handle this complexity through abstraction layers. Vendor-agnostic monitoring becomes crucial when managing platforms like EasyPost, nShift, and Cargoson simultaneously. Our testing showed that platform-specific monitoring tools create blind spots when problems span multiple integrations.

Circuit breaker patterns require modification for multi-carrier environments. Circuit breaker patterns become essential in multi-carrier environments. Implement circuit breaker patterns to prevent cascading failures and improve system resilience under load. However, standard circuit breaker implementations need modification for carrier mixing—you need business logic that understands which carriers can substitute for specific shipping lanes.

Your circuit breakers need context awareness beyond simple failure rates. A 5% error rate on Sunday evening requires different responses than the same rate during Monday morning processing. Geographic routing capabilities matter: when DHL fails for Germany-to-Poland shipments, your system needs alternative carriers that actually serve that lane with comparable service levels.

Cross-carrier correlation detection identifies patterns that span multiple APIs. When authentication starts failing across carriers simultaneously, that indicates infrastructure problems rather than individual carrier issues. When response times increase across all carriers serving the same geographic region, that suggests broader network or regulatory problems.

Production Implementation Guide

Building cascade detection in production requires balancing sensitivity with false positive prevention. Too aggressive and you'll trigger failover during normal traffic variations. Too conservative and cascades develop before detection.

Start with service-specific routing based on real-time carrier health. Use DHL for rate estimates while routing actual label creation to FedEx and UPS during partial degradation scenarios. This approach maintains customer experience while reducing load on struggling carriers.

Alert thresholds need careful tuning for cascade detection. Traditional monitoring alerts on absolute thresholds: error rate above 5%, response time above 1000ms. Cascade detection requires relative thresholds: response time increased 50% from baseline, error rate doubled in the past 10 minutes, or request capacity dropped below 30% remaining.

Integration with TMS platforms creates additional complexity. Cargoson's approach stood out by maintaining separate rate limit pools per carrier while coordinating cross-carrier failover based on service capability rather than just availability. Your TMS integration needs similar carrier-aware intelligence.

Webhook delay monitoring becomes critical during cascade scenarios. Failed tracking updates generate support calls from worried customers. Each minute of carrier API downtime creates a cascade of operational overhead that multiplies across your entire shipping operation. When carriers start throttling, webhook delays increase before API calls start failing.

Implement automated degradation modes that activate during cascade detection. When your system detects approaching cascade conditions, automatically implement: reduced polling frequency for non-critical tracking updates, cached rate responses for repeat requests, manual rate lookup procedures for high-priority shipments, and customer communication templates explaining potential delays.

Testing Cascade Scenarios

Production-ready cascade detection requires testing with realistic failure scenarios. Sandbox environments rarely replicate the rate limiting behaviors that create cascade failures in production.

If DHL Express API failures spike on Mondays, investigate their system maintenance schedules. We tracked this pattern and found that predictable maintenance windows create cascading failures when adaptive algorithms don't account for carrier-specific scheduling patterns. Your test scenarios need similar predictable failure patterns.

Build test harnesses that simulate multi-carrier rate limiting under realistic traffic patterns. European shipping patterns differ significantly from North American volumes: 60% parcel traffic, 40% freight, with geographic distribution concentrated around major hubs in London, Frankfurt, and Amsterdam.

Load testing methodologies for cascade detection require coordinated failure injection across multiple carriers simultaneously. Test scenarios where UPS rate limits trigger 30 minutes after FedEx starts throttling. Verify that your monitoring catches these delayed cascade patterns before they exhaust all carrier options.

Validate authentication cascade scenarios by simulating token refresh failures under load. Most OAuth implementations break down when multiple instances attempt concurrent token refresh. Your tests need to verify that authentication monitoring catches these scenarios before they cascade across your entire shipping infrastructure.

Recovery and Mitigation Strategies

Cascade recovery requires different strategies than individual carrier failures. Traditional failover assumes alternative carriers remain available. Cascade scenarios exhaust alternatives faster than individual carrier recovery.

That 500ms threshold appears across multiple implementations, but here's what vendors don't tell you: when managing five carriers simultaneously, hitting that threshold means your entire shipping workflow grinds to a halt. Your recovery procedures need to account for this reality.

Implement conveyor-integrated systems with latency-aware failure detection. When API latency exceeds 750ms for more than 30 seconds, automated systems should switch to manual processing modes before conveyor timeouts trigger broader facility shutdowns.

Automated degradation modes need carrier-specific implementation. When cascade detection triggers, implement different responses for different carriers: reduce polling frequency for tracking updates, implement cached responses for repeat rate requests, activate manual lookup procedures for high-priority shipments, and communicate proactively with customers about potential delays.

Failover decision trees for high-volume operations require business logic that understands shipping lane coverage. If your primary carrier for Germany-to-Poland shipments hits rate limits during peak season, the system should automatically route requests to your secondary carrier for that lane while preserving service level requirements.

Build communication workflows that activate during cascade detection. Customers need proactive notification when multiple carrier failures affect their shipments. Your automated systems should send different messages for isolated carrier problems versus multi-carrier cascade scenarios.

The cascade detection systems you implement today determine whether your shipping operations survive the next major carrier reliability crisis. Traditional monitoring catches individual failures after they impact your customers. Cascade-aware monitoring catches the warning signs before your entire multi-carrier strategy collapses into 90 seconds of operational chaos.

Start by auditing your current rate limit exposure across all carrier integrations. Document carrier-specific failure patterns and historical cascade triggers. Then implement the monitoring architecture that catches these problems before they exhaust your shipping capacity.

Your customers won't wait 90 seconds for your shipping systems to recover. But cascade-aware monitoring gives you the 3-5 minute warning window needed to prevent total failure and maintain service even when multiple carriers fail simultaneously.