Adaptive Rate Limiting Cascade Failures: Why Multi-Carrier API Throttling Breaks When Single-Carrier Solutions Scale to Production

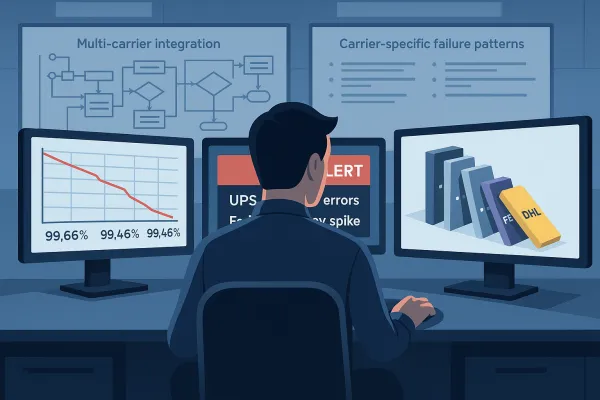

When FedEx, DHL, and UPS APIs all throttle simultaneously during Black Friday volume, theoretical improvements disappear fast. Between Q1 2024 and Q1 2025, average API uptime fell from 99.66% to 99.46%. That moves unavailability from 0.34% to 0.54%, roughly 60% more downtime year-over-year. Dynamic rate limiting can improve API performance by up to 42% under unpredictable traffic, but that headline figure masks critical failure patterns emerging in multi-carrier environments. Your adaptive algorithm might handle single carriers beautifully, but production tells a different story when you're juggling rate limits across carriers with completely different throttling mechanisms.

The Multi-Carrier Rate Limiting Crisis

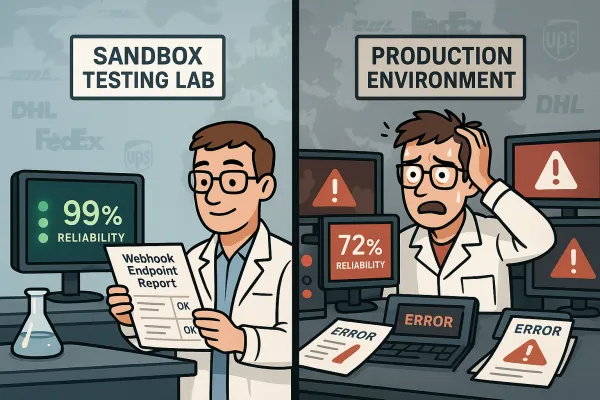

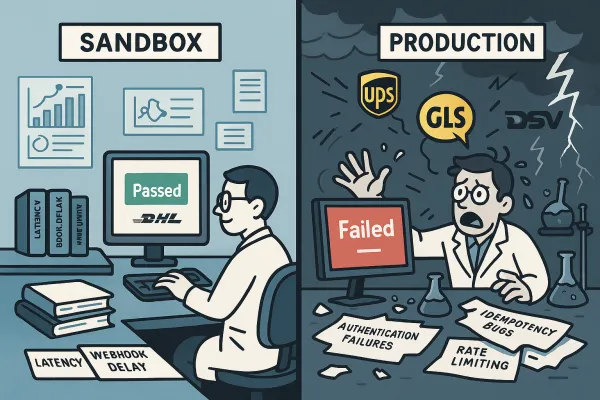

73% of integration teams report production authentication failures within weeks of going live, despite passing sandbox testing. For carrier integration teams, this translates to something more troubling: duplicate shipments and inventory mismanagement when retry logic fails. The problem compounds when adaptive rate limiting systems trained on single-carrier patterns encounter the chaos of managing DHL, UPS, and FedEx simultaneously.

Carrier APIs don't follow consistent header standards. FedEx uses proprietary headers, UPS implements rate limiting through error codes, and DHL varies by service endpoint. Successful multi-carrier strategies require normalization layers that translate different throttling signals into consistent internal metrics. When your adaptive algorithm detects high latency from UPS and reduces request volume, it has no way to know that DHL can still handle burst traffic—unless you build carrier-specific intelligence into the system.
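A normalization layer of this kind can be sketched as follows. This is a minimal illustration, not any carrier's actual contract: the carrier keys (`carrier_a`, `carrier_b`), the header name `X-Example-Retry-Seconds`, and the body code `EXAMPLE_RATE_LIMIT` are all placeholders. Only `Retry-After` is a standard HTTP header.

```python
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class ThrottleSignal:
    """Carrier-neutral view of a throttling response."""
    carrier: str
    throttled: bool
    retry_after_s: Optional[float]  # None when no usable hint was given


# Hypothetical per-carrier rules; replace with values from each carrier's docs.
HEADER_HINTS = {"carrier_a": "X-Example-Retry-Seconds"}  # proprietary header
BODY_CODES = {"carrier_b": {"EXAMPLE_RATE_LIMIT"}}       # throttling via error code


def _to_seconds(value: Optional[str]) -> Optional[float]:
    try:
        return float(value) if value is not None else None
    except ValueError:
        return None  # e.g. an HTTP-date Retry-After, not parsed in this sketch


def normalize(carrier: str, status: int, headers: Mapping[str, str],
              body_code: Optional[str] = None) -> ThrottleSignal:
    """Translate one carrier response into a consistent internal signal."""
    lowered = {k.lower(): v for k, v in headers.items()}
    hint_header = HEADER_HINTS.get(carrier, "Retry-After").lower()
    throttled = status == 429 or body_code in BODY_CODES.get(carrier, set())
    retry_after = _to_seconds(lowered.get(hint_header)) if throttled else None
    return ThrottleSignal(carrier, throttled, retry_after)
```

Downstream logic then reads `ThrottleSignal` only, so adding a carrier means adding a table entry rather than touching the adaptive algorithm.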

Platform comparison reveals interesting patterns. EasyPost handles burst traffic well but struggles with sustained high volume. nShift provides excellent visibility but can be slow to adapt. ShipEngine offers good balance but limited carrier coverage. Cargoson provides real-time visibility into rate limit consumption across all carrier integrations, with predictive alerting when approaching limits. Notice how each platform optimizes for different scenarios? That's because multi-carrier rate limiting requires trade-offs between responsiveness and stability.

The 500ms Threshold Trap

A common adaptive rule keys on response time: it adjusts concurrent requests once latency crosses 500ms. When FedEx starts returning 500ms responses instead of its usual 200ms, the system automatically reduces concurrent requests rather than waiting for 429 errors. That 500ms threshold appears across multiple implementations, but when you manage five carriers simultaneously and apply the rule globally, one carrier crossing it can grind your entire shipping workflow to a halt.

Sound familiar? You've probably seen this pattern: adaptive rate limiting works perfectly during testing, then breaks spectacularly under multi-carrier production loads. The algorithm treats latency spikes from one carrier as a signal to throttle all carriers. Meanwhile, DHL and UPS could be operating normally, but your system reduces their request volume anyway.
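One way to avoid that cross-carrier penalty is to keep a separate concurrency limit per carrier. The sketch below assumes a simple halve-on-slow, add-one-on-fast rule. The class name, the default limit of 10, and the adjustment steps are illustrative choices. Only the 500ms threshold comes from the pattern described above.

```python
class CarrierConcurrency:
    """Tracks an adaptive concurrency limit for each carrier independently."""

    def __init__(self, max_limit: int = 10, threshold_ms: float = 500.0):
        self.max_limit = max_limit
        self.threshold_ms = threshold_ms
        self.limits: dict[str, int] = {}

    def limit_for(self, carrier: str) -> int:
        # Carriers with no observations yet start at the maximum.
        return self.limits.get(carrier, self.max_limit)

    def record(self, carrier: str, latency_ms: float) -> int:
        """Update one carrier's limit from one observed latency."""
        limit = self.limit_for(carrier)
        if latency_ms > self.threshold_ms:
            limit = max(1, limit // 2)               # back off quickly
        else:
            limit = min(self.max_limit, limit + 1)   # recover gradually
        self.limits[carrier] = limit
        return limit
```

With this structure, a slow FedEx response lowers only the FedEx limit, while `limit_for("dhl")` and `limit_for("ups")` stay where they were.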

Production vs. Sandbox Reality in Adaptive Systems

73% of integration teams reported production authentication failures within weeks of carrier API deployments that sailed through sandbox testing. Yet these same teams spent months perfecting their integration against stable test environments, only to discover that production environments operate under completely different rules.

DHL's test environment limits you to 500 service invocations daily, but its production thresholds operate differently. Carriers like DHL start with basic limits of 250 calls per day and a maximum of 1 call every 5 seconds, and require approval for higher thresholds. Your adaptive algorithm learned to handle consistent sandbox behavior, but production introduces variables that weren't present during development.
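A client-side guard for limits shaped like "250 calls per day, at most 1 call every 5 seconds" might look like the sketch below. It assumes the daily quota resets 86,400 seconds after the first call in a window. Real carriers may reset on a calendar boundary instead, so treat the reset rule and the class name as assumptions.

```python
from typing import Optional


class PacedDailyQuota:
    """Enforces a daily call cap plus a minimum spacing between calls."""

    def __init__(self, daily_limit: int = 250, min_interval_s: float = 5.0):
        self.daily_limit = daily_limit
        self.min_interval_s = min_interval_s
        self.day_start: Optional[float] = None
        self.used = 0
        self.last_call: Optional[float] = None

    def try_call(self, now: float) -> bool:
        """Return True and consume quota if a call is allowed at time `now`."""
        # Assumed reset rule: a fresh window starts 24h after the previous one began.
        if self.day_start is None or now - self.day_start >= 86_400:
            self.day_start, self.used = now, 0
        if self.used >= self.daily_limit:
            return False
        if self.last_call is not None and now - self.last_call < self.min_interval_s:
            return False
        self.used += 1
        self.last_call = now
        return True
```

Note the arithmetic: at one call every 5 seconds, 250 calls are gone in about 21 minutes, so under sustained load the daily cap, not the pacing rule, is the binding constraint.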

Consider the migration pressure as well. USPS's new APIs enforce strict rate limits of approximately 60 requests per hour, where the legacy system handled roughly 6,000 requests per minute without throttling. USPS Web Tools shut down on January 25, 2026, and FedEx SOAP endpoints retire on June 1, 2026. For enterprise teams managing thousands of shipments daily, this creates a perfect storm: forced migrations under hard deadlines while dealing with the new reality of aggressive rate limiting across all major carriers.

Carrier-Specific Throttling Mechanisms

Here's where most adaptive implementations break: they assume HTTP 429 responses work the same way across carriers. Carrier APIs don't just return 429 errors. They might return 503 Service Unavailable during maintenance windows, or 502 Bad Gateway when their upstream systems fail. Your rate limiting logic needs to distinguish between "slow down" signals and "try again later" signals.
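The distinction can be made explicit with a small classifier. This is a sketch under the assumption that status codes alone are enough to decide. In practice some carriers signal throttling in the response body, so the mapping below is a starting point, and the `Action` names are illustrative.

```python
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"          # request succeeded
    SLOW_DOWN = "slow_down"      # rate limited: reduce request rate
    RETRY_LATER = "retry_later"  # carrier-side outage or maintenance
    FAIL = "fail"                # anything else: do not retry blindly


def classify(status: int) -> Action:
    """Map an HTTP status code to a rate limiting decision."""
    if status == 429:
        return Action.SLOW_DOWN
    if status in (502, 503):
        return Action.RETRY_LATER
    if 200 <= status < 300:
        return Action.PROCEED
    return Action.FAIL
```

Keeping `SLOW_DOWN` and `RETRY_LATER` separate matters because only the first should feed the adaptive rate estimate. An outage says nothing about how many requests the carrier would normally accept.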

During a recent stress test across DHL, UPS, and FedEx APIs simultaneously, we discovered that each carrier's rate limiting behaved differently under sustained load. DHL's sliding window approach allowed burst capacity recovery within minutes, while UPS's fixed window required waiting full reset periods. FedEx showed the most aggressive throttling but provided clearer rate limit headers for prediction.

Look at how different TMS platforms handle 429 responses. MercuryGate and Blue Yonder typically implement exponential backoff with maximum retry limits. Manhattan Active and SAP TM include more sophisticated queueing mechanisms. Cargoson goes further by maintaining different retry strategies for different types of carrier responses, understanding that a FedEx 429 response requires different handling than a DHL timeout.

Benchmarking Real-World Adaptive Performance

Our benchmark harness measured eight integrations over three months: five platforms (EasyPost, nShift, ShipEngine, LetMeShip, and Cargoson) plus three direct carrier integrations (DHL Express, FedEx Ground, and UPS). The testing revealed a fundamental gap between single-carrier optimization and multi-carrier reality.

The test methodology simulated European shipping patterns: morning batch processing for overnight shipments, midday rate shopping spikes, and afternoon pickup scheduling. We tracked response times, error rates, and retry behavior across different load levels. Multi-carrier platforms like EasyPost, ShipEngine, nShift, and Cargoson add another abstraction layer, but their rate limiting doesn't eliminate the underlying carrier restrictions.

The platform trade-offs described earlier held up under load. The key insight? Each platform optimizes for different traffic patterns, but none solved the fundamental challenge of coordinating adaptive algorithms across multiple carriers with conflicting rate limit signals.

Token Bucket vs. Sliding Window Under Multi-Carrier Load

For most public APIs, the token bucket algorithm is the strongest general-purpose default. It enforces a predictable long-term rate while allowing controlled bursts that reflect real usage patterns, which makes it well suited for developer-facing platforms. But token bucket algorithms assume you're managing a single resource pool.

Multi-carrier environments break this assumption. When UPS exhausts its token bucket, should your system stop making requests to DHL? Problems arise when retries are not coordinated with enforcement: if a downstream service begins failing and upstream services retry aggressively, total traffic can exceed the original request volume. In fan-out systems, where one request triggers multiple internal calls, this effect multiplies quickly. Effective rate limiting in microservices requires aligning enforcement with retry behavior and service topology. Otherwise, it can amplify instability instead of containing it.

UPS APIs typically respond within 200-400ms for authentication requests. DHL SOAP endpoints take 800-1200ms. When these baselines shift, it indicates infrastructure changes that affect your authentication flows before they cause outright failures. Different carriers operate on different timescales, making unified token bucket management problematic.
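A straightforward alternative to a unified pool is one token bucket per carrier, each with its own rate and burst capacity. The sketch below takes the clock as an argument so it can be tested deterministically. The rates in the usage example are illustrative, not published carrier limits.

```python
class TokenBucket:
    """Classic token bucket: refills at `rate_per_s`, holds at most `capacity`."""

    def __init__(self, rate_per_s: float, capacity: float, now: float = 0.0):
        self.rate_per_s = rate_per_s
        self.capacity = capacity
        self.tokens = capacity   # start full so an initial burst is allowed
        self.updated = now

    def try_acquire(self, now: float, cost: float = 1.0) -> bool:
        """Refill for elapsed time, then spend `cost` tokens if available."""
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_per_s)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# One independent bucket per carrier (illustrative numbers only).
buckets = {
    "ups": TokenBucket(rate_per_s=2.0, capacity=4.0),
    "dhl": TokenBucket(rate_per_s=0.2, capacity=1.0),  # about 1 call per 5 seconds
}
```

Because each bucket refills on its own schedule, exhausting `buckets["ups"]` has no effect on whether `buckets["dhl"].try_acquire(now)` succeeds.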

Production-Grade Multi-Carrier Solutions

Vendor-agnostic monitoring becomes crucial when managing platforms like EasyPost, nShift, and Cargoson simultaneously. Our testing showed that platform-specific monitoring tools create blind spots when problems span multiple integrations. The most successful production implementations combine adaptive algorithms with carrier-specific intelligence.

Circuit breaker patterns prevent cascading failures when carrier endpoints go down. The pattern works like this: track error rates and response times, automatically stop making requests when thresholds are exceeded, and periodically test if the service has recovered. But implementing circuit breakers across multiple carriers requires careful coordination—you don't want UPS problems to trigger DHL circuit breakers.
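A per-carrier breaker keeps those failure domains separate. The sketch below is deliberately simplified: it counts consecutive failures rather than tracking error rates and response times, and after the cooldown it lets probes through without capping how many. The threshold and cooldown values are illustrative.

```python
from collections import defaultdict
from typing import Optional


class CircuitBreaker:
    """Opens after N consecutive failures, allows probes after a cooldown."""

    def __init__(self, failure_threshold: int = 3, cooldown_s: float = 30.0):
        self.failure_threshold = failure_threshold
        self.cooldown_s = cooldown_s
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self, now: float) -> bool:
        if self.opened_at is None:
            return True  # closed: traffic flows normally
        # Open: block until the cooldown has elapsed, then allow a probe.
        return now - self.opened_at >= self.cooldown_s

    def record(self, success: bool, now: float) -> None:
        if success:
            self.failures = 0
            self.opened_at = None  # a successful probe closes the breaker
            return
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = now   # open, or re-open after a failed probe


# Each carrier gets its own breaker, so UPS failures never open the DHL breaker.
breakers: defaultdict[str, CircuitBreaker] = defaultdict(CircuitBreaker)
```

A caller would check `breakers[carrier].allow(now)` before each request and call `record(...)` with the outcome afterwards.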

The key is building failover logic that understands business context, not just technical failure. If your primary carrier for Germany-to-Poland shipments hits rate limits during peak season, the system should automatically route requests to your secondary carrier for that lane while preserving service level requirements. This requires more than adaptive algorithms—it needs business rule engines that understand shipping lanes and carrier capabilities.
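A minimal version of that lane-aware failover is sketched below. It assumes each lane has a preference-ordered list of carriers with a known transit time, and that the service level can be reduced to a maximum transit time in days. The `LaneOption` type, the carrier names, and the transit figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Sequence


@dataclass(frozen=True)
class LaneOption:
    carrier: str
    transit_days: int


def pick_carrier(options: Sequence[LaneOption], max_transit_days: int,
                 rate_limited: Iterable[str]) -> Optional[str]:
    """Return the first preferred carrier that is available and meets the SLA."""
    blocked = set(rate_limited)
    for option in options:  # options are listed in preference order
        if option.carrier in blocked:
            continue        # carrier is currently throttled
        if option.transit_days > max_transit_days:
            continue        # would violate the service level
        return option.carrier
    return None             # nothing qualifies: queue the shipment or alert


# Hypothetical Germany-to-Poland lane configuration.
DE_PL = [LaneOption("primary_carrier", 2), LaneOption("secondary_carrier", 3)]
```

For example, `pick_carrier(DE_PL, 3, {"primary_carrier"})` routes to the secondary carrier, while the same call with a 2-day requirement returns `None` because the fallback would break the service level.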

Error Pattern Recognition and Recovery

REST APIs return different error codes than SOAP. HTTP 429 (rate limited) becomes your new nemesis. When a client surpasses its allowed request rate, the rate limiter should respond with an HTTP 429 Too Many Requests status code. This is the standard way to indicate that the user has sent too many requests in a given amount of time. Your monitoring needs to distinguish between temporary throttling and actual service failures, because your response strategy differs completely.

When DHL returns a 429, your system should implement exponential backoff with jitter, not immediately fail over to backup carriers. But when FedEx returns 503 during maintenance windows, immediate failover might be appropriate. The challenge is building systems that recognize these patterns across different carrier APIs and respond appropriately.
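Exponential backoff with jitter can be sketched in a few lines. This version uses the "full jitter" variant, where each delay is drawn uniformly between zero and the exponential ceiling. The base delay, the cap, and the injectable `rng` parameter are illustrative choices.

```python
import random
from typing import Callable


def backoff_delay(attempt: int, base_s: float = 1.0, cap_s: float = 60.0,
                  rng: Callable[[], float] = random.random) -> float:
    """Full-jitter delay for a zero-based retry attempt.

    The ceiling doubles with each attempt up to `cap_s`; the actual delay is a
    random fraction of that ceiling so that clients do not retry in lockstep.
    """
    ceiling = min(cap_s, base_s * (2 ** attempt))
    return rng() * ceiling


def retry_delays(max_attempts: int, **kwargs) -> list[float]:
    """Delays to sleep before each retry, for attempts 0..max_attempts-1."""
    return [backoff_delay(attempt, **kwargs) for attempt in range(max_attempts)]
```

The jitter is the important part in a multi-carrier setup: without it, every worker that was throttled at the same moment retries at the same moment and triggers the next 429.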

Cargoson, along with competitors like MercuryGate and BluJay, built abstraction layers that handle the OAuth complexity, implement intelligent rate limiting queues, and provide fallback mechanisms when USPS quotas are exceeded. These platforms succeed because they encode carrier-specific knowledge into their rate limiting logic, rather than applying generic adaptive algorithms across all carriers.

Future-Proofing Multi-Carrier Rate Limiting

Looking ahead, successful teams are implementing hybrid approaches: adaptive algorithms for traffic management combined with static reserves for critical shipments. The goal isn't perfect optimization; it's predictable performance under unpredictable conditions. Organizations that thrive in 2025 will prioritize reliability over theoretical efficiency. While competitors struggle with integration bottlenecks and service disruptions, intelligent throttling, predictive alerting, and automatic failover put a 99.9% uptime target within reach.
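The static reserve half of that hybrid can be as simple as holding back part of each carrier's request budget. In the sketch below, the class name and the numbers are illustrative, and the adaptive part is assumed to live elsewhere and decide `total` for the current window.

```python
class ReservedBudget:
    """Request budget for one window, with a slice held back for critical calls."""

    def __init__(self, total: int, critical_reserve: int):
        if not 0 <= critical_reserve <= total:
            raise ValueError("critical_reserve must be between 0 and total")
        self.total = total
        self.critical_reserve = critical_reserve
        self.used = 0

    def try_spend(self, critical: bool = False) -> bool:
        """Consume one request if the caller's ceiling has not been reached."""
        # Routine traffic may not dip into the reserved slice.
        ceiling = self.total if critical else self.total - self.critical_reserve
        if self.used < ceiling:
            self.used += 1
            return True
        return False
```

Routine rate shopping stops once the unreserved share is spent, but a critical shipment can still get a label until the full budget is exhausted.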

Machine learning shows promise for predicting carrier-specific rate limit patterns. Future work should focus on integrating advanced machine learning models into the adaptive layer to improve traffic prediction accuracy and on optimizing computational overhead for resource-constrained environments. But remember: complexity is the enemy of reliability. The most successful production implementations start simple and add intelligence gradually.

Platform consolidation continues across the industry. Enterprise shipping platforms like Cargoson, project44, and Descartes provide this kind of carrier abstraction layer. They handle carrier API changes, manage authentication complexity, and provide unified interfaces that survive individual carrier migrations. Whether you build or buy, the key is isolating your business logic from carrier-specific rate limiting quirks.

Start by auditing your current rate limiting assumptions. Most adaptive algorithms work well for single carriers but break under multi-carrier loads. Build carrier-specific intelligence into your systems, implement proper circuit breakers, and monitor business impact rather than just technical metrics. The 2026 migration deadlines aren't going away—plan accordingly.