Production OAuth Token Cascade Failures in Carrier Integrations: How to Build Monitoring That Catches Authentication Breakdowns Before They Kill Your Shipping Workflow

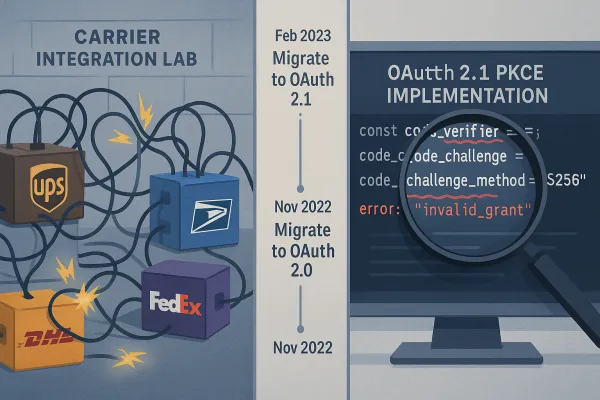

UPS completed their OAuth 2.1 migration on January 15, 2025. By February 3rd, 73% of integration teams reported production authentication failures. Yet most enterprise teams discovered this crisis only after their shipping workflows ground to a halt. The issue manifested as intermittent 401 responses during peak traffic periods, particularly affecting OAuth token refresh operations.

This isn't isolated to UPS. USPS and FedEx followed suit, making PKCE mandatory across their APIs. In 2026, all three carriers will complete a shift that has been years in the making: retiring legacy carrier APIs in favor of more modern, secure platforms. What looks like progress on paper becomes production hell when authentication systems buckle under load.

The statistics reveal the scope. Between Q1 2024 and Q1 2025, average API uptime fell from 99.66% to 99.46%. That means downtime rose from 0.34% to 0.54%, or roughly 60% more downtime year-over-year. 99% of organizations experienced API security issues in the past 12 months. Yet most teams still treat authentication as a configuration problem rather than a production dependency requiring dedicated monitoring.

Why Sandbox Success Becomes Production Hell

Your OAuth flow passes every test. Tokens refresh perfectly. Rate limits never hit. Then you deploy to production and authentication cascades start failing under concurrent load.

Multiple processes might attempt to refresh the same token simultaneously. This creates a race condition where:

- Process A detects an expired token and starts refreshing

- Process B also detects the same expired token and starts refreshing

- Both processes make refresh requests to the OAuth provider

- The last process to complete might overwrite the "good" new token with an already-expired one

In the worst case, you lose your valid refresh token, which makes it impossible to refresh the access token in the future and forces users to re-authenticate. This is the most insidious failure pattern: token refresh logic that breaks down only under load.

The biggest reason is that most authentication failures don't look like outages. OAuth token endpoints often remain reachable and responsive, even when they're broken in practice. A token request may return an HTTP 200 status while the response body contains an error such as invalid_client or invalid_grant. From the perspective of basic uptime monitoring, everything appears healthy—even though no valid tokens are being issued.
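As a rough illustration of the point above, a health check can inspect the response body instead of trusting the status code. This is a minimal sketch: `check_token_response` is a hypothetical helper, and it assumes the token endpoint returns a JSON object with either an `access_token` field or an `error` field, which is the standard OAuth 2.0 shape but may differ per carrier.

```python
import json
from typing import Tuple


def check_token_response(status_code: int, body: str) -> Tuple[bool, str]:
    """Return (healthy, reason) for a raw token endpoint response.

    Assumption: the endpoint answers with a JSON object that carries either
    an "access_token" or an "error" field.
    """
    if status_code != 200:
        return False, f"http_{status_code}"
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        # A 200 with an HTML error page or an empty body is still a failure.
        return False, "invalid_json"
    if not isinstance(payload, dict):
        return False, "unexpected_payload"
    if "error" in payload:
        # For example invalid_client or invalid_grant hidden behind a 200.
        return False, str(payload["error"])
    if not payload.get("access_token"):
        return False, "missing_access_token"
    return True, "ok"
```

A basic uptime probe would mark `check_token_response(200, '{"error": "invalid_client"}')` as healthy; this check reports it as a failure with the reason `invalid_client`.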

The Carrier-Specific OAuth Nightmare

Each carrier implements OAuth differently. We implemented OAuth for the 50 most popular APIs, such as Google (Gmail, Calendar, Sheets etc.), HubSpot, Shopify, Salesforce, Stripe, Jira, Slack, Microsoft (Azure, Outlook, OneDrive), LinkedIn, Facebook, GitHub, and other OAuth APIs. Our conclusion: The real-world OAuth experience is comparable to JavaScript browser APIs in 2008. There's a general consensus on how things should be done, but in reality every API has its own interpretation of the standard, implementation quirks, and nonstandard behaviors.

Most APIs these days expire access tokens after a short while. To get a refresh token, you need to request "offline_access," which needs to be done through a parameter, a scope, or something you set when you register your OAuth app. UPS handles this differently than FedEx. DHL's sandbox accepts different scopes than production. La Poste's refresh tokens expire without warning under concurrent load.

The Hidden Failure Patterns Your Monitoring Misses

When OAuth token endpoints degrade, are misconfigured, or stop issuing valid tokens, every dependent API call fails, even if the API itself is healthy. Traditional monitoring focuses on HTTP status codes and response times. Authentication failures hide in successful responses.

OAuth and JWT failures are rarely obvious. In fact, they're some of the hardest production issues to detect, even in mature monitoring setups. JWT-related failures are even more subtle. Tokens can be issued successfully and still fail later due to: expired claims, invalid audience configurations, or signing key rotations that break token validation.
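A monitor can catch the first two of these by reading the claims of a freshly issued token. The sketch below is an assumption-heavy illustration: not every carrier issues JWT access tokens, the helper names are hypothetical, and the payload is decoded without verifying the signature, so it does not detect signing key rotation. That check needs a proper JWT library and the carrier's published keys.

```python
import base64
import json
import time
from typing import List, Optional


def decode_jwt_claims(token: str) -> dict:
    """Decode the payload segment of a JWT WITHOUT verifying its signature."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("not a three-part JWT")
    payload_b64 = parts[1]
    # JWTs strip base64 padding, so restore it before decoding.
    payload_b64 += "=" * (-len(payload_b64) % 4)
    return json.loads(base64.urlsafe_b64decode(payload_b64))


def claim_problems(
    claims: dict,
    expected_audience: str,
    now: Optional[float] = None,
    min_remaining_s: int = 60,
) -> List[str]:
    """List claim-level problems that would make the token fail later."""
    now = time.time() if now is None else now
    problems = []
    exp = claims.get("exp")
    if exp is None:
        problems.append("missing_exp")
    elif exp - now < min_remaining_s:
        problems.append("expired_or_expiring")
    aud = claims.get("aud")
    # The "aud" claim may be a single string or a list of strings.
    audiences = aud if isinstance(aud, list) else [aud]
    if expected_audience not in audiences:
        problems.append("audience_mismatch")
    return problems
```

Running `claim_problems` right after issuance flags a token that was issued successfully but will be rejected by the API it is meant for.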

This is why production authentication issues often surface only after users complain or error rates spike. To reliably detect these problems, teams need synthetic monitoring that behaves like a real client in production: requesting tokens, validating responses, and using those tokens in live API calls on a continuous basis.

Multi-Carrier Authentication Complexity

Managing OAuth across multiple carriers multiplies the complexity. Each carrier connection introduces unique security requirements, authentication methods, and data handling constraints. Multi-carrier shipping platforms like Cargoson, ShipEngine, Sendcloud, EasyPost, and nShift face the same integration problem and build abstraction layers that handle carrier-specific OAuth requirements.

Different APIs handle token refreshes differently, which affects how concurrency issues manifest. For example, some APIs (like GitHub) issue only one access token per OAuth client at a time. When you're managing connections to UPS, FedEx, DHL, and La Poste simultaneously, each with a different concurrency model, authentication monitoring has to account for every model separately.

Building Production-Grade OAuth Monitoring That Actually Works

True OAuth token endpoint monitoring validates behavior, not just availability. You need monitoring that understands carrier-specific failure patterns and can detect authentication degradation before cascade failures take down shipping workflows.

Start with synthetic authentication flows that mirror production behavior:

- Request tokens using production credentials in isolated test environments

- Validate token response payloads for embedded errors

- Test token usage across actual carrier endpoints

- Monitor concurrent refresh operations under load
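The first three steps can be sketched as a single probe. This is an illustration only: `run_synthetic_auth_check` and its two callables are hypothetical, and you would supply your own functions that call the carrier's token endpoint and a cheap authenticated endpoint.

```python
import time
from typing import Callable, Tuple


def run_synthetic_auth_check(
    request_token: Callable[[], Tuple[int, dict]],
    call_api: Callable[[str], int],
) -> dict:
    """Request a token, validate the payload, then use the token on a real call.

    Assumptions: request_token returns (http_status, parsed_json_body) and
    call_api takes an access token and returns the HTTP status of the call.
    """
    started = time.monotonic()
    result = {"step": "token_request", "ok": False, "detail": ""}
    status, payload = request_token()
    if status != 200:
        result["detail"] = f"http_{status}"
    elif "error" in payload:
        result["detail"] = str(payload["error"])
    elif not payload.get("access_token"):
        result["detail"] = "missing_access_token"
    else:
        # The token looks valid, so prove it by using it.
        result["step"] = "api_call"
        api_status = call_api(payload["access_token"])
        result["ok"] = 200 <= api_status < 300
        result["detail"] = f"http_{api_status}"
    result["elapsed_ms"] = (time.monotonic() - started) * 1000.0
    return result
```

Reporting the failing step separately matters: a failure at `token_request` points at the carrier's auth system, while a failure at `api_call` with a fresh token points at scopes, audiences, or the API itself.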

OAuth token refresh concurrency issues are subtle but can cause significant problems in production. The key is to implement proper locking mechanisms that prevent multiple refresh attempts and ensure API requests wait for ongoing refreshes to complete.
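One way to implement that locking inside a single process is shown below. It is a minimal sketch, not a complete solution: `TokenManager` and `refresh_fn` are hypothetical names, and a `threading.Lock` only coordinates threads in one process. Multiple worker processes or hosts would need a shared lock (for example in a database or cache) built on the same idea.

```python
import threading
import time
from typing import Callable, Optional, Tuple


class TokenManager:
    """Serializes refreshes so concurrent callers share one refresh call."""

    def __init__(
        self,
        refresh_fn: Callable[[], Tuple[str, float]],
        skew_s: float = 30.0,
    ):
        # Hypothetical callable: returns (access_token, lifetime_in_seconds).
        self._refresh_fn = refresh_fn
        # Refresh slightly early so a token never expires mid-request.
        self._skew_s = skew_s
        self._lock = threading.Lock()
        self._token: Optional[str] = None
        self._expires_at = 0.0

    def get_token(self) -> str:
        # Every caller takes the lock, so requests that arrive during a
        # refresh wait for it to finish instead of starting their own.
        with self._lock:
            fresh = (
                self._token is not None
                and time.monotonic() < self._expires_at - self._skew_s
            )
            if not fresh:
                token, lifetime_s = self._refresh_fn()
                self._token = token
                self._expires_at = time.monotonic() + lifetime_s
            return self._token
```

With this in place, eight threads that all see an expired token trigger exactly one call to `refresh_fn`; the other seven block briefly and then reuse the new token.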

Carrier-Aware Authentication Health Scoring

Build monitoring that tracks authentication health across carriers:

- Token Success Rate: Track successful token issuance vs. failures per carrier

- Refresh Latency: Monitor how long token refresh operations take under load

- Concurrent Refresh Handling: Test whether carriers properly handle simultaneous refresh requests

- Error Pattern Recognition: Distinguish between temporary throttling and actual token revocation
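The first two metrics can be tracked with a small rolling window per carrier. The sketch below is illustrative: `AuthHealthTracker` is a hypothetical class, the window size is arbitrary, and a production setup would more likely export these values to an existing metrics system.

```python
import math
from collections import defaultdict, deque


class AuthHealthTracker:
    """Keeps the last `window` token requests per carrier and summarizes them."""

    def __init__(self, window: int = 100):
        # deque(maxlen=...) silently drops the oldest sample when full.
        self._events = defaultdict(lambda: deque(maxlen=window))

    def record(self, carrier: str, ok: bool, latency_ms: float) -> None:
        self._events[carrier].append((ok, latency_ms))

    def snapshot(self, carrier: str) -> dict:
        events = self._events.get(carrier)
        if not events:
            return {"samples": 0, "success_rate": None, "p95_latency_ms": None}
        latencies = sorted(latency for _, latency in events)
        # Nearest-rank 95th percentile of refresh latency.
        p95 = latencies[math.ceil(0.95 * len(latencies)) - 1]
        successes = sum(1 for ok, _ in events if ok)
        return {
            "samples": len(events),
            "success_rate": successes / len(events),
            "p95_latency_ms": p95,
        }
```

Keeping the window per carrier is the important design choice: a drop in one carrier's success rate stays visible instead of being averaged away by healthy carriers.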

OAuth tokens expire, endpoint URLs change, and rate limits adjust seasonally. You need monitoring that catches these changes before they break production systems. Additionally, the longer a token remains valid, the more dangerous it becomes if compromised. By controlling token lifetimes, organizations reduce the window of opportunity for threat actors.

Implement alerts for authentication degradation patterns:

- Increased refresh frequency indicating premature token expiration

- Rising 401 errors from previously valid tokens

- Scope permission failures that suggest configuration drift

- Geographic access anomalies that could indicate credential compromise
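The first pattern can be checked with simple arithmetic: compare how long a token actually worked against the lifetime the carrier advertised in `expires_in`. The function name and the 90% tolerance below are assumptions chosen for illustration, not a recommended threshold.

```python
def premature_expiry(
    issued_at: float,
    rejected_at: float,
    expires_in: float,
    tolerance: float = 0.9,
) -> bool:
    """True if a token was rejected well before its advertised lifetime elapsed.

    issued_at and rejected_at are timestamps in seconds; expires_in is the
    lifetime in seconds that the token response advertised.
    """
    observed_lifetime = rejected_at - issued_at
    return observed_lifetime < tolerance * expires_in
```

A token advertised for 3600 seconds that starts returning 401 after 1800 seconds would trip this check, while one rejected after 3500 seconds would not.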

Emergency Response: When Authentication Cascades Start Failing

When La Poste's authentication fails, your team should know whether to implement immediate carrier failover or wait for the auth system to recover. These decisions require carrier-specific knowledge that most monitoring tools don't provide.

Build runbooks for authentication cascade scenarios:

- Immediate Triage: Distinguish between rate limiting, token expiration, and credential revocation

- Carrier Failover: Route traffic to backup carriers when primary authentication fails

- Token Recovery: Implement automated re-authentication flows without user intervention

- Incident Communication: Alert shipping operations teams before cascades impact shipments
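The triage step can be encoded so that on-call engineers and automation reach the same conclusion. The mapping below is a heuristic sketch: it assumes carriers use HTTP 429 for throttling and the standard OAuth 2.0 error codes (`invalid_grant`, `invalid_client`, `invalid_token`), which you should confirm against each carrier's documentation. The names `AuthFailure` and `triage` are hypothetical.

```python
from enum import Enum
from typing import Optional


class AuthFailure(Enum):
    RATE_LIMITED = "rate_limited"              # back off and retry later
    TOKEN_EXPIRED = "token_expired"            # refresh the access token
    CREDENTIAL_REVOKED = "credential_revoked"  # re-authenticate or rotate
    UNKNOWN = "unknown"                        # escalate to a human


# Error codes that suggest refreshing will not help (assumed mapping).
_CREDENTIAL_ERRORS = {"invalid_grant", "invalid_client", "unauthorized_client"}


def triage(status_code: int, error_code: Optional[str] = None) -> AuthFailure:
    """Classify an auth failure from its HTTP status and OAuth error code."""
    if status_code == 429:
        return AuthFailure.RATE_LIMITED
    # Checked before the status code because some endpoints return these
    # errors with a 200 or 400 status.
    if error_code in _CREDENTIAL_ERRORS:
        return AuthFailure.CREDENTIAL_REVOKED
    if status_code == 401 or error_code == "invalid_token":
        return AuthFailure.TOKEN_EXPIRED
    return AuthFailure.UNKNOWN
```

Each outcome maps to a different runbook branch: waiting out a rate limit, refreshing a token, and failing over to a backup carrier are very different responses to what looks like the same 401 spike.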

Monitor for unusual activity, such as mass data exports or unexpected geographic access, and have playbooks ready to immediately revoke and rotate compromised tokens. Practice breach drills so that security teams know how to execute revocations across multiple systems under time pressure.

Circuit Breaker Patterns for Authentication

Circuit breaker patterns prevent cascading failures when carrier endpoints go down. The pattern works like this: track error rates and response times, automatically stop making requests when thresholds are exceeded, and periodically test if the service has recovered.

Apply circuit breakers to authentication flows:

- Track authentication failure rates per carrier

- Stop authentication attempts when failure rates spike

- Route traffic to carriers with healthy authentication

- Periodically test failed carriers for recovery
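The four steps above can be sketched as a small per-carrier breaker. This is a simplified illustration: it counts consecutive failures instead of a true failure rate, the class and function names are hypothetical, and the thresholds are placeholders.

```python
import time
from typing import Callable, Dict, List, Optional


class AuthCircuitBreaker:
    """Opens after N consecutive auth failures, then allows probes after a timeout."""

    def __init__(
        self,
        failure_threshold: int = 5,
        recovery_timeout_s: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ):
        self._failure_threshold = failure_threshold
        self._recovery_timeout_s = recovery_timeout_s
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def allow_request(self) -> bool:
        if self._opened_at is None:
            return True  # closed: authentication attempts flow normally
        # Open: block until the timeout passes, then let probes through.
        return self._clock() - self._opened_at >= self._recovery_timeout_s

    def record_success(self) -> None:
        self._failures = 0
        self._opened_at = None  # recovery confirmed, close the circuit

    def record_failure(self) -> None:
        self._failures += 1
        if self._failures >= self._failure_threshold:
            # Opens the circuit, or restarts the timer after a failed probe.
            self._opened_at = self._clock()


def pick_carrier(
    preferred: List[str], breakers: Dict[str, AuthCircuitBreaker]
) -> Optional[str]:
    """Return the first carrier, in preference order, whose breaker allows traffic."""
    for carrier in preferred:
        if breakers[carrier].allow_request():
            return carrier
    return None
```

Injecting the clock keeps the breaker testable without sleeping, and `pick_carrier` returning `None` gives the caller an explicit signal that every carrier's authentication is currently failing.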

Long-term Architecture: Building Authentication Resilience

Abstraction Layer Strategy: Build or buy integration platforms that isolate your business logic from carrier-specific implementation details. When UPS decides to retire its current REST APIs, say in 2028, you want configuration changes, not code rewrites.

Platforms like Cargoson, nShift, and BluJay provide abstraction layers that handle OAuth complexity. Some, such as Cargoson, also build monitoring into their carrier abstraction layer, so you get carrier-specific health metrics without building custom monitoring for each API. Compare this approach against managing individual carrier monitoring with platforms like ShipEngine or EasyPost.

Service accounts tied to projects fundamentally change how developers should structure authentication in production systems. Instead of sharing a single key across multiple environments, teams should:

- Create one project per environment (e.g., service-dev, service-staging, service-prod)

- Assign one service account per microservice or integration point

- Use labels and expiry policies to maintain governance and ensure unused keys are automatically retired

This approach reduces blast radius and simplifies incident response in case of a breach.

Implement token health scoring that predicts authentication failures before they happen. Monitor patterns like refresh frequency increases, scope permission degradation, and geographic access anomalies. Then keep the credentials themselves under control:

- Maintain an inventory: Build and maintain a clear, up-to-date catalog of all OAuth tokens and service credentials

- Remove dormant integrations: Conduct regular audits of third-party apps and revoke tokens for those no longer in use

- Shorten token lifespans: Configure access tokens to expire quickly and limit refresh tokens wherever possible

- Rotate and expire regularly: Enforce policies requiring tokens to expire and be rotated on a schedule, just like passwords

This isn't the last carrier API migration you'll face. Even after these migrations are complete, carriers will continue updating pricing logic, delivery data, security requirements, and services. The teams that survive these authentication challenges are building systems that fail gracefully and recover automatically. Monitor everything, trust nothing, and prepare for the next wave of carrier API changes already heading toward production.