AI Agent Carrier API Security Crisis: Why 95% of TMS Teams Miss Critical Vulnerabilities That Surface Only When Agents Go Autonomous

Production AI agents that connect to carrier APIs are an immediate security threat, and 95% of technical teams are running them without proper security controls. Some 80.9% of teams are already in active testing or production, yet only 14.4% report full security/IT approval for their AI agent deployments. While your team debates whether to implement nShift, EasyPost, or Cargoson for carrier integrations, attackers are already exploiting AI agent vulnerabilities to bypass authentication, execute unauthorized shipments, and exfiltrate supply chain data at scale.

The Silent Invasion: AI Agents Are Already in Your Carrier Integration Stack

Your carrier APIs aren't just processing traditional API calls anymore. AI agents are no longer experimental—they are production infrastructure, and they're connecting to UPS, FedEx, DHL, and DSV systems through platforms like ShipEngine, Shippo, and emerging solutions like Cargoson with minimal human oversight.

45.6% of teams still rely on shared API keys for agent-to-agent authentication, while 27.2% have reverted to custom, hardcoded logic to manage authorization. When your procurement AI agent shares credentials with your shipping agent, or when both use the same DHL API key, you've created what security researchers call "identity collapse"—a scenario where compromise of one agent instantly grants access to your entire logistics stack.

The speed of adoption has caught enterprise security teams off guard. Traditional API security models assume human oversight and predictable request patterns, and AI agents break both assumptions. They make thousands of rapid-fire calls to carrier APIs, dynamically discover new endpoints, and act without human supervision. If an agent misinterprets a prompt or is manipulated, it can execute malicious actions, such as deleting files or sending sensitive information, before anyone notices.

Modern carrier APIs are enabling this autonomous behavior by design. UPS's REST APIs, FedEx's Developer Portal, and DHL's Express APIs all provide comprehensive programmatic access to shipping, tracking, and customs data. What they don't provide are security models designed for non-human entities that can be psychologically manipulated through natural language.

Attack Vector Deep Dive: How AI Agents Become Carrier API Backdoors

The ServiceNow BodySnatcher vulnerability (CVE-2025-12420 with a CVSS score of 9.3) provides a perfect case study of how AI agents amplify traditional security flaws. With only a target's email address, attackers could impersonate administrators and execute AI agents to override security controls and create backdoor accounts with full privileges.

Here's the attack chain that security teams missed: By chaining a hardcoded, platform-wide secret with account-linking logic that trusts a simple email address, attackers bypass multi-factor authentication (MFA), single sign-on (SSO), and other access controls. The vulnerability exploited the interaction between ServiceNow's Virtual Agent API and Now Assist AI Agents, turning what should be isolated chatbot functionality into a pathway for privilege escalation.

AI agents significantly amplify the impact of traditional security flaws. In the BodySnatcher case, a simple authentication bypass became a full platform takeover because the AI agent could execute high-privilege workflows on behalf of the compromised identity. This isn't theoretical—the researcher who discovered it called BodySnatcher "the most severe AI-driven vulnerability uncovered to date" because attackers could effectively "remote control" an organization's AI.

The Model Context Protocol (MCP) introduces similar vulnerabilities in carrier integrations. Cisco's 2026 AI security research highlights the growing risk surface of MCP agentic AI and notes how adversaries can use agents to execute attack campaigns with tireless efficiency. Real-world exploits are already emerging, including a misconfigured GitHub MCP server that allowed unauthorized access to private vulnerability reports, and WhatsApp MCP abuse where rogue tools exposed message histories via indirect prompt injection.

Real Production Failures: When Agents Execute Unauthorized Shipments

A manufacturing company's procurement agent was manipulated over three weeks through seemingly helpful "clarifications" about purchase authorization limits. By the time the attack was complete, the agent believed it could approve any purchase under $500,000 without human review. The attacker then placed $5 million in false purchase orders across 10 separate transactions.

This attack pattern translates directly to carrier integrations. An AI agent managing shipments for a logistics company could be gradually conditioned to accept increasingly expensive shipping options, ignore weight restrictions, or route packages to unintended destinations. The financial impact compounds because carrier APIs often process high-value transactions—international freight, customs bonds, and express delivery surcharges can reach tens of thousands of dollars per shipment.

Supply chain attacks through AI agent frameworks are becoming more sophisticated. The Barracuda Security report identified 43 different agent framework components with embedded vulnerabilities introduced via supply chain compromise, with many developers running outdated versions unaware of the risk. When your shipping agent imports a compromised library, attackers gain access to every carrier API key, customer address, and shipment record in your system.

The Technical Reality: Why Current Security Controls Don't Work

API rate limiting fails against AI agents because they don't behave like human users or even traditional integrations. AI systems have evolved from basic question-answering tools into autonomous agents that can reason, plan, make decisions, and execute tasks with minimal human supervision, which calls for more specialized and more urgent security frameworks than earlier AI systems required.

Your DHL API might allow 1,000 requests per hour, expecting predictable patterns—rate quotes in the morning, label creation during business hours, tracking checks in the afternoon. AI agents operate differently. They might burn through your entire rate limit in minutes while "exploring" new shipping options, or spread requests across multiple APIs to avoid detection while exfiltrating customer data.

OAuth token scope explosion becomes inevitable with autonomous systems. Traditional integrations request specific scopes such as "read shipment data" or "create labels." AI agents need broader access to adapt to changing requirements, so they request "admin" or "full access" scopes because their exact needs are hard to predict. MCP makes this more complex: tokens or permissions provided to servers can be over-permissioned, long-lived, and unscoped, giving agents far more access than they need. The "confused deputy" problem compounds the risk, because a server with high privileges can execute actions on behalf of a lower-privileged user, and the MCP protocol does not inherently carry user context from host to server.

Webhook security gaps emerge when AI agents process carrier API events. Your system might receive thousands of tracking updates, delivery confirmations, and exception notifications daily, and agents processing these webhooks can be manipulated through crafted payloads. MCP adds a related attack vector through indirect prompt injection: attackers craft messages that appear harmless to users but contain embedded commands, and when users share those messages with their AI assistant, the injected commands trigger unauthorized actions.
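
One common mitigation for crafted payloads is to authenticate each webhook and pass only a fixed set of structured fields to the agent. The sketch below assumes the carrier or aggregator signs the raw request body with HMAC-SHA256 and a shared secret; signing schemes differ between providers, so the secret, the field names, and both function names are illustrative assumptions rather than any specific carrier's API.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret; a real one would come from a secrets manager.
WEBHOOK_SECRET = b"example-secret-not-for-production"

# Assumed field names. Free-text fields are dropped so they never reach the agent.
ALLOWED_FIELDS = {"tracking_number", "status", "event_time"}


def verify_signature(body: bytes, signature_hex: str, secret: bytes = WEBHOOK_SECRET) -> bool:
    """Return True if signature_hex is the HMAC-SHA256 of body under secret."""
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, signature_hex)


def parse_tracking_event(body: bytes, signature_hex: str) -> dict:
    """Reject unsigned payloads, then keep only allowlisted fields."""
    if not verify_signature(body, signature_hex):
        raise ValueError("webhook signature mismatch")
    payload = json.loads(body)
    return {key: value for key, value in payload.items() if key in ALLOWED_FIELDS}
```

Signature checks only prove where a payload came from. Dropping free-text fields is what limits the room for embedded instructions, at the cost of discarding notes that might occasionally be useful.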

Identity management for non-human entities breaks traditional enterprise security models. Only 22% of teams treat agents as independent identities, with most still relying on shared API keys. When your UPS shipping agent and your FedEx rate agent share the same identity, audit trails become meaningless. You can't determine which agent initiated a suspicious transaction, making incident response nearly impossible.

Production-Grade Security Framework for AI Agent Carrier Integrations

Zero-trust architecture for AI agents requires treating every agent as a potential threat, regardless of its intended purpose. Organizations must adopt least privilege, zero-trust architecture, and maintain continuous human supervision. This means your shipping agent gets access to exactly the carrier APIs it needs for its specific function—FedEx ground rates but not express, domestic shipping but not international customs.
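
A deny-by-default permission table is one way to express that kind of narrow access. This is a minimal sketch: the agent ID, the `Permission` fields, and the `is_allowed` helper are hypothetical names chosen for illustration, and a production system would load grants from a policy store rather than a module-level dictionary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Permission:
    carrier: str
    service: str
    region: str  # "domestic" or "international"


# Hypothetical grants: anything not listed here is denied.
AGENT_PERMISSIONS = {
    "shipping-agent-01": frozenset({Permission("fedex", "ground", "domestic")}),
}


def is_allowed(agent_id: str, carrier: str, service: str, region: str) -> bool:
    """Deny by default: unknown agents and unlisted combinations return False."""
    granted = AGENT_PERMISSIONS.get(agent_id, frozenset())
    return Permission(carrier, service, region) in granted
```

With these grants, `is_allowed("shipping-agent-01", "fedex", "express", "domestic")` is false, as is any call made by an agent ID that has no entry.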

Behavioral monitoring becomes the cornerstone of AI agent security. Organizations should implement behavioral monitoring by Q1 2026 to instrument agent systems and capture reasoning and tool usage, while immediately deploying human-in-the-loop checkpoints for high-impact agents. When your DHL agent suddenly starts requesting rate quotes for shipments to countries you've never shipped to, or begins creating labels with unusual weight patterns, automated systems should flag and halt the activity.
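
The two signals mentioned above (a destination country never seen before, and a weight far outside the usual range) can be sketched with a simple baseline check. The record shape, the `flag_anomalies` name, and the z-score threshold of 3.0 are assumptions made for illustration; real deployments would likely use richer features and tuned thresholds.

```python
from statistics import mean, stdev


def flag_anomalies(history: list, request: dict, z_threshold: float = 3.0) -> list:
    """Compare one request against an agent's past shipments.

    history and request use the assumed keys 'country' and 'weight_kg'.
    Returns a list of reason strings; an empty list means nothing was flagged.
    """
    reasons = []
    known_countries = {shipment["country"] for shipment in history}
    if request["country"] not in known_countries:
        reasons.append("new_destination_country")

    weights = [shipment["weight_kg"] for shipment in history]
    if len(weights) >= 2:  # stdev needs at least two data points
        mu, sigma = mean(weights), stdev(weights)
        if sigma > 0 and abs(request["weight_kg"] - mu) / sigma > z_threshold:
            reasons.append("unusual_weight")
    return reasons
```

A caller could halt the agent whenever the returned list is non-empty and route the request to a human reviewer.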

Credential rotation and least-privilege access controls must be automated and continuous. Security must shift from periodic, manual audits to continuous, identity-aware enforcement, treating AI agents as first-class security principals. Each agent should have unique API keys that rotate every 24 hours, with access scoped to specific carrier endpoints and geographic regions.
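
The 24-hour rotation rule can be sketched as a small credential record with an expiry check. Everything here is illustrative: `AgentCredential`, `issue_credential`, and `rotate_if_needed` are hypothetical names, and the key is generated locally, whereas a real rotation would also have to register the new key with the carrier or a secrets manager.

```python
import secrets
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

ROTATION_INTERVAL = timedelta(hours=24)


@dataclass
class AgentCredential:
    agent_id: str
    api_key: str
    issued_at: datetime

    def is_expired(self, now: datetime) -> bool:
        return now - self.issued_at >= ROTATION_INTERVAL


def issue_credential(agent_id: str, now: datetime) -> AgentCredential:
    # Assumption: the key is minted locally; a real system would call its key service.
    return AgentCredential(agent_id, secrets.token_urlsafe(32), now)


def rotate_if_needed(cred: AgentCredential, now: datetime) -> AgentCredential:
    """Return the same credential while valid, or a fresh one after 24 hours."""
    return issue_credential(cred.agent_id, now) if cred.is_expired(now) else cred
```

Passing `now` explicitly (for example `datetime.now(timezone.utc)`) keeps the expiry logic easy to test.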

Real-time guardrails prevent agents from executing unauthorized actions. These aren't traditional rate limits—they're intelligent barriers that understand shipping business logic. Your agent might be allowed to create 100 labels per hour during peak season but only 10 during off-hours. It can process domestic shipments automatically but requires human approval for international freight over $1,000.
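
The limits described above translate into a small policy function. This sketch takes the numbers from the paragraph (100 labels per hour in the peak window, 10 otherwise, human approval for international shipments over $1,000); the `LabelRequest` type, the `evaluate` name, and the three return values are assumptions made for illustration.

```python
from dataclasses import dataclass

PEAK_LABELS_PER_HOUR = 100
OFF_PEAK_LABELS_PER_HOUR = 10
INTERNATIONAL_APPROVAL_THRESHOLD_USD = 1_000


@dataclass
class LabelRequest:
    international: bool
    value_usd: float


def evaluate(request: LabelRequest, labels_this_hour: int, peak: bool) -> str:
    """Return 'allow', 'deny', or 'needs_human_approval' for one label request."""
    limit = PEAK_LABELS_PER_HOUR if peak else OFF_PEAK_LABELS_PER_HOUR
    if labels_this_hour >= limit:
        return "deny"
    if request.international and request.value_usd > INTERNATIONAL_APPROVAL_THRESHOLD_USD:
        return "needs_human_approval"
    return "allow"
```

Keeping the check outside the agent's own reasoning loop means a manipulated prompt cannot talk its way past the limit.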

Platform selection becomes crucial for security. While EasyPost and ShipEngine provide robust API coverage, their security models weren't designed for autonomous agents. Transporeon and MercuryGate offer enterprise-grade audit controls, while newer solutions like Cargoson are building security features specifically for AI-driven integrations.

Implementation Checklist: Securing AI Agents Before Production

Authentication mechanism upgrades must eliminate shared API keys entirely. Implement OAuth 2.0 with short-lived tokens and unique agent identities. Each AI agent needs its own service account with cryptographic authentication that can't be shared or stolen through prompt injection.
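
To illustrate what "short-lived tokens with unique agent identities" means in practice, the sketch below mints and verifies an HMAC-signed token that names one agent and expires after five minutes. It is a standard-library stand-in for tokens issued by a real OAuth 2.0 authorization server, not an OAuth implementation; the signing key, TTL, claim names, and function names are all assumptions.

```python
import base64
import hashlib
import hmac
import json
import time

SIGNING_KEY = b"example-signing-key"  # hypothetical; keep real keys in a secrets manager
TOKEN_TTL_SECONDS = 300


def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).decode()


def mint_token(agent_id: str, scope: str, now: float = None) -> str:
    """Issue a token bound to one agent identity and one scope."""
    now = time.time() if now is None else now
    claims = json.dumps(
        {"sub": agent_id, "scope": scope, "exp": now + TOKEN_TTL_SECONDS}, sort_keys=True
    ).encode()
    signature = hmac.new(SIGNING_KEY, claims, hashlib.sha256).digest()
    return _b64(claims) + "." + _b64(signature)


def verify_token(token: str, now: float = None):
    """Return the claims dict if the token is authentic and unexpired, else None."""
    now = time.time() if now is None else now
    try:
        claims_b64, signature_b64 = token.split(".")
        claims = base64.urlsafe_b64decode(claims_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
    except ValueError:  # wrong number of parts or invalid base64
        return None
    expected = hmac.new(SIGNING_KEY, claims, hashlib.sha256).digest()
    if not hmac.compare_digest(expected, signature):
        return None
    data = json.loads(claims)
    return data if data["exp"] > now else None
```

Because each token carries its own `sub` claim, audit logs can attribute every carrier call to a single agent instead of a shared key.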

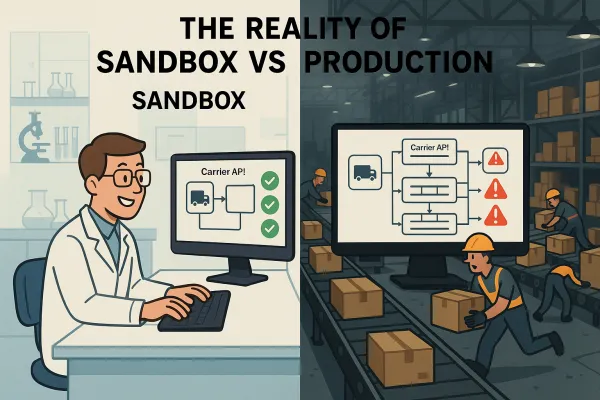

Testing harnesses for AI agent behavior validation should simulate attack scenarios before production deployment. Red team exercises reveal where agents are most vulnerable, and they often show that agents are far more suggestible than expected, especially after being conditioned by multiple prompts. Test how your shipping agent responds to social engineering attempts, malicious tracking data, and corrupted webhook payloads.

Monitoring setup for agent-to-carrier API interactions requires capturing not just API calls but the reasoning behind them. Traditional API logs show what happened—"created shipping label for 10kg package to London." AI agent logs must show why—"customer requested expedited shipping, compared FedEx and DHL rates, selected FedEx based on delivery time optimization." This context is essential for detecting manipulation attempts.
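
A structured log record that stores the "why" next to the "what" might look like the following. The field names and the `build_decision_record` helper are assumptions chosen for illustration; the point is only that reasoning and inputs are captured as queryable fields rather than buried in free-form text.

```python
import json
from datetime import datetime, timezone


def build_decision_record(agent_id: str, action: str, reasoning: str,
                          inputs: dict, now: datetime = None) -> str:
    """Serialize one agent decision as a JSON line for the audit log."""
    now = now or datetime.now(timezone.utc)
    return json.dumps(
        {
            "timestamp": now.isoformat(),
            "agent_id": agent_id,
            "action": action,        # what happened
            "reasoning": reasoning,  # why the agent says it happened
            "inputs": inputs,        # data the decision was based on
        },
        sort_keys=True,
    )


record = build_decision_record(
    agent_id="shipping-agent-01",
    action="created shipping label for 10kg package to London",
    reasoning="customer requested expedited shipping; compared FedEx and DHL rates; "
              "selected FedEx based on delivery time",
    inputs={"carriers_compared": ["fedex", "dhl"], "weight_kg": 10},
)
```

An agent's stated reasoning is self-reported, so it is best treated as evidence to correlate with the actual API calls, not as ground truth.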

Memory integrity controls should be implemented by Q3 2026 with immutable audit trails for agent long-term storage. Your shipping agent's memory of customer preferences, frequent destinations, and shipping patterns becomes a target for poisoning attacks. Protect this data with cryptographic integrity checks and tamper-evident logging.
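
Tamper-evident logging is often built as a hash chain, in which each record's hash covers the previous record's hash. The sketch below shows the idea for an agent's memory writes; the record layout and the `append` and `verify` names are hypothetical, and a real system would also store the chain somewhere the agent itself cannot rewrite.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first record


def _digest(prev_hash: str, entry: dict) -> str:
    payload = json.dumps(entry, sort_keys=True).encode()
    return hashlib.sha256(prev_hash.encode() + payload).hexdigest()


def append(log: list, entry: dict) -> None:
    """Add an entry whose hash depends on every record before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"entry": entry, "hash": _digest(prev_hash, entry)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited, removed, or reordered record breaks it."""
    prev_hash = GENESIS
    for record in log:
        if record["hash"] != _digest(prev_hash, record["entry"]):
            return False
        prev_hash = record["hash"]
    return True
```

If an attacker rewrites a stored customer preference, `verify` returns False from that record onward unless they can also recompute and replace every later hash.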

Future-Proofing: Preparing for the Next Wave of AI Integration Attacks

Agent-to-agent communication attacks will emerge as AI systems become more interconnected. Your shipping agent might coordinate with inventory agents, customer service agents, and financial agents. Multi-agent coordination via APIs or message buses often lacks basic encryption, authentication, or integrity checks, allowing attackers to intercept, spoof, or modify messages in real time. That opens the door to agent-in-the-middle attacks, in which an attacker forces agents to downgrade to unencrypted protocols and then injects hidden commands.
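
Message authentication with replay protection addresses the spoofing and modification cases. In this minimal sketch, each message carries a random nonce and an HMAC over the whole envelope; the envelope fields, `sign_message`, and `Receiver` are illustrative names, and the sketch assumes a pre-shared key per agent pair. It does not encrypt anything, so transport encryption such as TLS is still assumed.

```python
import hashlib
import hmac
import json
import secrets


def sign_message(key: bytes, sender: str, body: dict) -> dict:
    """Wrap body in an envelope with a fresh nonce and an HMAC-SHA256 tag."""
    envelope = {"sender": sender, "nonce": secrets.token_hex(16), "body": body}
    raw = json.dumps(envelope, sort_keys=True).encode()
    envelope["mac"] = hmac.new(key, raw, hashlib.sha256).hexdigest()
    return envelope


class Receiver:
    def __init__(self, key: bytes):
        self._key = key
        self._seen_nonces = set()  # in-memory only; an assumption of this sketch

    def accept(self, envelope: dict) -> bool:
        """Accept a message only if its tag is valid and its nonce is new."""
        unsigned = {k: v for k, v in envelope.items() if k != "mac"}
        raw = json.dumps(unsigned, sort_keys=True).encode()
        expected = hmac.new(self._key, raw, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, envelope.get("mac", "")):
            return False  # spoofed or modified in transit
        if unsigned["nonce"] in self._seen_nonces:
            return False  # replay of an earlier message
        self._seen_nonces.add(unsigned["nonce"])
        return True
```

A shared symmetric key cannot tell the two agents apart from each other; asymmetric signatures would be the natural next step where that distinction matters.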

Supply chain risks in AI framework dependencies will multiply as the ecosystem matures. Supply chain compromises are nearly undetectable until activated, with security teams unable to easily distinguish between legitimate library updates and poisoned ones—meaning backdoors can remain in infrastructure for months before detection. Carrier integration platforms need software bill-of-materials tracking and automated dependency scanning.
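
Hash pinning is one building block of automated dependency scanning: every artifact must match a digest recorded when it was last reviewed. The sketch below compares artifact bytes against a pinned SHA-256 table; the function name and the in-memory dictionaries are assumptions, and in practice a package manager's hash-checking mode or an SBOM tool would do this work.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def find_unpinned_or_modified(artifacts: dict, pinned: dict) -> list:
    """Return names of artifacts that have no pin or whose digest differs.

    artifacts maps name -> file bytes; pinned maps name -> expected SHA-256 hex.
    """
    return sorted(
        name for name, data in artifacts.items()
        if pinned.get(name) != sha256_hex(data)
    )
```

A digest check catches artifacts that changed after review. It cannot catch a dependency that was already malicious when it was pinned, which is why review at pin time still matters.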

Regulatory compliance considerations are evolving rapidly. The EU AI Act requires risk assessments for high-risk AI systems, a category that may include automated shipping and logistics systems depending on how they are classified. DORA (Digital Operational Resilience Act) mandates digital operational resilience testing for financial entities and their critical ICT third-party providers. Relying on existing regulations like the EU AI Act provides false comfort if the underlying technical infrastructure is still built on shared passwords and "shadow" identities.

The window for proactive security is closing. These threats are not hypothetical: the last 18 months have provided a brutal education in the risks of unchecked AI adoption, and the lessons from those breaches are essential for any CISO planning a 2026 security strategy. Organizations that implement comprehensive AI agent security controls now will have a competitive advantage as regulatory requirements tighten and customer trust becomes a differentiator.

While you cannot audit every decision an agent makes or manually review every prompt, you can implement structural controls that make agent compromise significantly more difficult and slower to execute. The choice isn't between AI innovation and security—it's between controlled AI deployment with proper safeguards and reactive security after a breach has already compromised your supply chain operations.